L&D Budget Guide: Models, Cost Categories, and ROI for High-Impact Programs

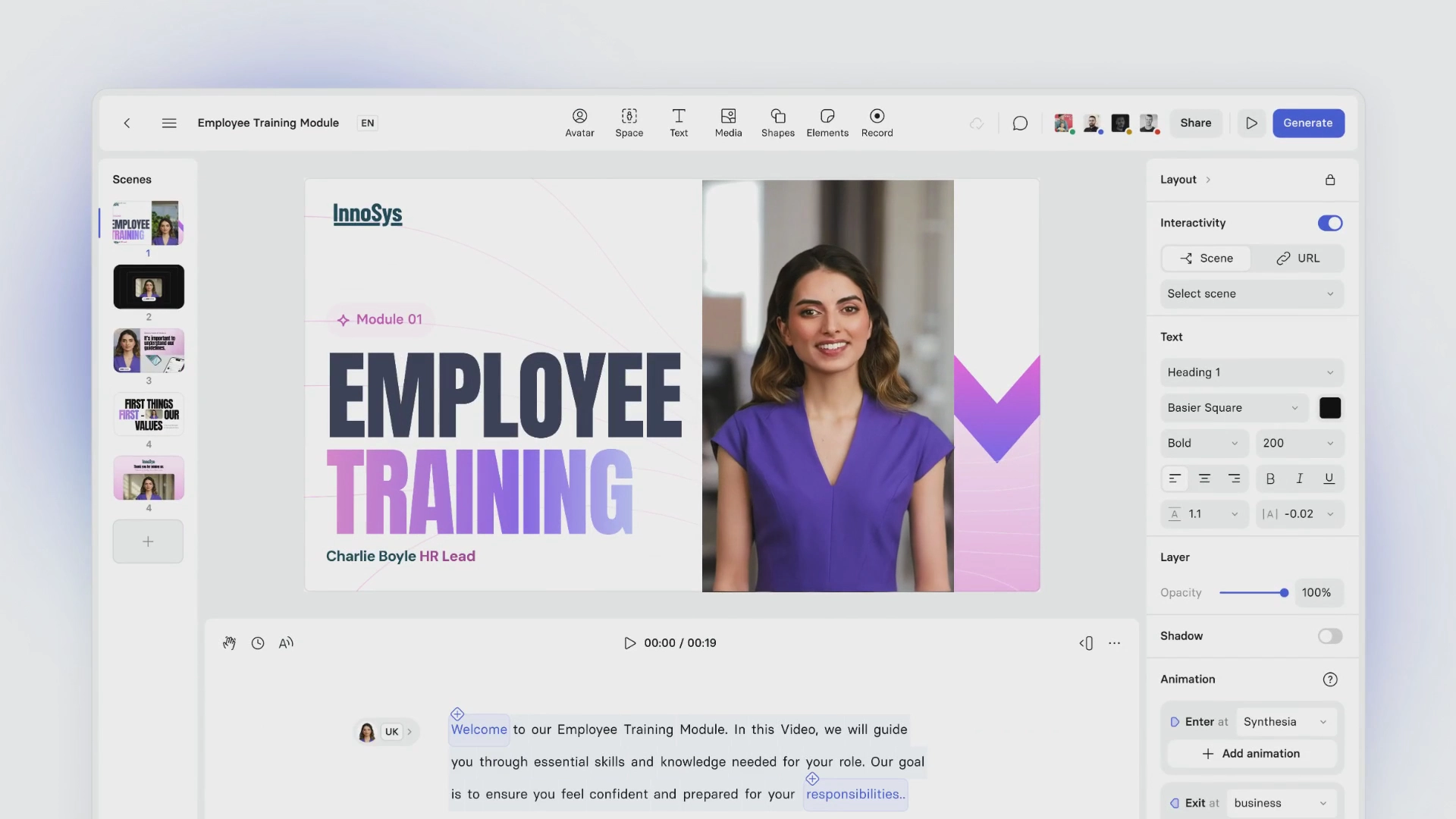

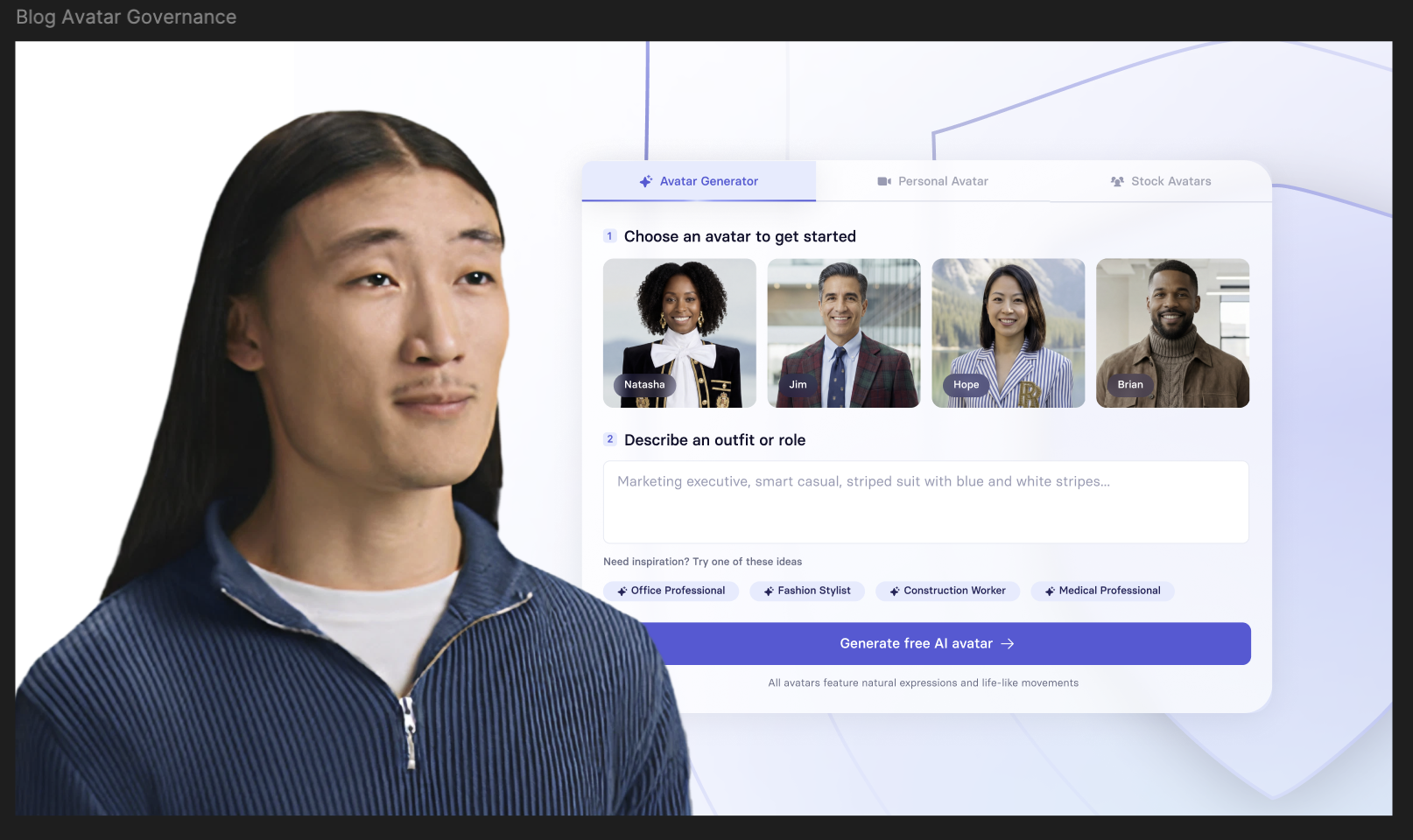

Create engaging training videos in 160+ languages.

L&D budgeting is rarely straightforward. The impact of executive development or onboarding isn’t always directly measurable, so teams often start with proxy indicators and then mature toward cross-functional outcomes like performance, engagement, operational metrics, and sometimes quota.

At the same time, organizations spend an average of $1,254 per employee on direct learning. That level of investment is driving more scrutiny of L&D budgets, more pressure to prove business impact, and more urgency to modernize how learning is delivered, including where AI fits.

Whether you’re setting up an L&D cost center for the first time or re-evaluating your current approach, this guide breaks down the most common L&D budgeting models, how to capture true costs, and which measurement approaches to use based on program scope and risk.

Set the foundations

Choose the path below that matches your situation: building from scratch or re-evaluating an existing budget.

Building an L&D budget

Start by asking your Finance partner:

- What fiscal year are we budgeting for, and what’s the planning cycle?

  Define when budgets lock, when reforecasts happen, and how approvals flow.

- How does Finance want L&D spend categorized and reported?

  Clarify how cost centers are structured, how reporting lines work, and where L&D sits.

- How are different types of costs classified and approved?

  Distinguish which costs count as headcount, which fall under operating expenses, and which require procurement.

- What does this mean for speed and flexibility?

  Identify what can be changed quickly and what requires a longer runway.

- What procurement constraints do we need to design around?

  Outline how security reviews, vendor onboarding, purchasing thresholds, and contracts affect timelines.

Re-evaluating an existing L&D budget

Start by mapping where spend actually lives today:

- What’s in the L&D cost center vs. distributed across teams?

  Vendors, travel, coaching, tools.

- Where is there duplication?

  Multiple providers, overlapping platforms, inconsistent standards.

- Which investments skew your benchmarks?

  Executive development centralized for a small audience.

- What changed since the last cycle?

  Strategy, compliance, reorg, new regions, AI adoption.

💡Tip: Some of the biggest budget drivers aren’t obvious line items. They show up as multipliers: every new audience, region, refresh cycle, or workflow change increases cost unless you plan for reuse and governance.
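
To see how these multipliers compound, here is a minimal sketch. The function name, the parameters, and every figure are hypothetical and chosen only for illustration. It assumes each audience and region combination needs its own content variant, and that reuse lowers the cost of each additional variant.

```python
def annual_content_cost(base_build_cost, audiences, regions, refreshes_per_year,
                        adaptation_share=1.0, refresh_share=0.25):
    """Estimate yearly content cost under simple multiplier assumptions.

    adaptation_share: fraction of the base build cost needed for each extra
        audience/region variant (1.0 = rebuilt from scratch, lower = more reuse).
    refresh_share: fraction of the total build cost spent on each refresh cycle.
    """
    variants = audiences * regions
    # The first variant pays the full build; each additional one pays the adaptation share.
    build = base_build_cost * (1 + (variants - 1) * adaptation_share)
    # Every refresh cycle touches all variants, so it scales with the build cost.
    refresh = build * refresh_share * refreshes_per_year
    return build + refresh


# Hypothetical program: $20,000 base build, 3 audiences, 4 regions, 2 refreshes a year.
no_reuse = annual_content_cost(20_000, audiences=3, regions=4, refreshes_per_year=2)
with_reuse = annual_content_cost(20_000, audiences=3, regions=4, refreshes_per_year=2,
                                 adaptation_share=0.2)

print(f"No reuse:   ${no_reuse:,.0f}")
print(f"With reuse: ${with_reuse:,.0f}")
```

The absolute numbers matter less than the shape: cost grows with the product of audiences, regions, and refresh cycles, so planning for reuse changes the estimate far more than trimming any single line item.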

Choose your L&D budgeting model

Once you’ve aligned with Finance and clarified how spend is classified, choose a budgeting approach that matches how your organization operates. Here are common L&D budgeting models:

- Program-based budgeting

  Fund priority programs such as onboarding, compliance, manager essentials, and role academies as a portfolio with defined scope, owners, and success measures.

- Cost-per-head allocation

  Set a standard investment per employee or percentage of payroll to fund shared capability building and simplify forecasting.

- Decentralized team budgets

  Business units or functions fund role-specific learning, while central L&D sets standards, governs vendors, and tracks outcomes.

- Individual learning stipends / allowances

  Employees receive an annual learning budget with clear guardrails around eligible categories, approvals, and reimbursement rules.

- Showback / chargeback

  L&D runs as a shared service with a catalog. Costs are billed back or made visible based on usage.

- Central infrastructure with distributed delivery ops

  Central teams fund platforms and reusable assets, while regions and teams cover variable delivery costs like travel, rooms, catering, and materials.
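
As a rough illustration of how two of these models combine, the sketch below adds a cost-per-head allocation to a small portfolio of funded programs. The `Program` class, the `hybrid_budget` function, and all figures are hypothetical; a real plan would follow the cost categories agreed with Finance.

```python
from dataclasses import dataclass


@dataclass
class Program:
    name: str
    annual_budget: float  # planned spend for one priority program


def hybrid_budget(headcount: int, per_head: float, programs: list[Program]) -> dict[str, float]:
    """Combine a cost-per-head allocation with program-based funding."""
    shared = headcount * per_head  # shared capability building
    program_total = sum(p.annual_budget for p in programs)
    total = shared + program_total
    return {
        "shared": shared,
        "programs": program_total,
        "total": total,
        "per_employee": total / headcount,  # comparable to a per-employee benchmark
    }


# Hypothetical inputs: 800 employees, $500 per head, two funded programs.
plan = hybrid_budget(
    headcount=800,
    per_head=500.0,
    programs=[Program("Onboarding", 120_000.0), Program("Compliance", 60_000.0)],
)
print(plan)
```

With these made-up inputs the plan works out to $725 per employee, a single figure that can be compared against whichever per-employee benchmark your Finance partner uses.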

Hybrid models are often the most realistic option at scale. The goal is to combine predictable funding for enterprise priorities with flexibility for role- and team-specific needs.

💡Tip: Define what’s centralized vs team-owned, and why. Centralize for consistency and scale; decentralize for role and business-specific needs.

Apply your model

Once you’ve chosen a model (or mix), translate it into structural decisions. This is what makes program budgets easier to build, easier to explain to Finance, and easier to measure over time.

If you’re building from scratch, decide:

- What stays centralized, including platforms, core programs, measurement standards, and shared content production

- What stays local, including role-specific learning, team budgets, conferences, and some delivery operations

- How programs will be budgeted so spend and outcomes stay comparable

If you’re re-evaluating, focus on:

- Consolidating where governance and scale matter, including platforms, workflows, vendor standards, and measurement

- Shifting variable spend closer to the business with clear guardrails, lightweight approvals, and escalation paths

- Separating enterprise-wide programs from high-cost, limited-audience initiatives

Budgeting for headcount and capacity

L&D budgets shape how much work your team can actually deliver. That includes both ongoing programs and the capacity behind them.

Some work needs to run continuously. Other work expands and contracts depending on demand. It’s worth separating the two early.

A useful starting point: what needs to be consistently available, and what can flex?

Ongoing work tends to include onboarding, compliance updates, manager enablement, core role readiness, and localization.

More variable work shows up as facilitation spikes, custom workshops, redesign efforts, and event-driven learning.

Not every capability has to sit in-house. What matters is having reliable coverage across program ownership, design, production, operations, measurement, and facilitation.

💡Tip: Separate “run-the-business” capacity (keep programs operating and updated) from “change-the-business” capacity (new builds, transformations, redesigns).

Measuring impact

A big part of building an L&D budget is funding measurement so you can show what’s working and where to adjust. Not every initiative needs a full ROI study, but your measurement approach should scale with program scope and risk — credible enough to demonstrate impact, light enough to keep delivery moving.

Baseline metrics

For most programs, the goal isn’t to “prove ROI.” It’s to measure the signals that predict transfer (whether people apply what they learned at work) and create feedback loops that improve the experience over time.

Research on training transfer consistently shows outcomes depend on more than the training itself, including learner factors and the work environment.

- Access and completion

  Track reach, completion, and time to complete so you know what was actually used. These metrics show coverage, but they don’t tell you much about impact.

- Learning checks that reflect retention

  Use short retrieval-based checks spaced over time rather than one-off quizzes. This approach is more likely to reflect what people retain.

- Early signals of transfer

  Run a short follow-up pulse 2–4 weeks later. Ask whether the learning has been used and what got in the way. Add manager input where possible to strengthen the signal.

- In-flow usage of performance support

  When learning is embedded in the workflow through job aids, checklists, or searchable guidance, track how often it’s used and adopted on the job.

- Operational indicators linked to outcomes

  Select one or two leading indicators tied to the role, such as quality, cycle time, error rates, adherence, or resolution time. These are often the clearest link between learning and business performance.

💡Tip: For broad programs, combine these signals with a small set of real examples, covering both strong outcomes and weaker ones, to understand what drove impact and what held it back.

Decision-grade evaluation

For higher-cost, higher-visibility programs, baseline metrics aren’t enough.

The goal is to show a credible chain from learning → behavior → business outcome, using methods that are realistic in enterprise environments and clear about assumptions.

- Define the outcome and the decision it supports

  Be specific about what should change in the business, such as time to proficiency, error rates, quality, cycle time, or quota attainment. Clarify what leaders will do with the result, whether that’s scaling, redesigning, stopping, or increasing investment.

- Measure behavior and transfer

  Plan a structured follow-up at the right intervals, often 30, 60, or 90 days. Combine learner input with manager confirmation or evidence from the workflow.

- Estimate the program’s contribution

  Use the strongest approach available, such as pilot and comparison groups, staggered rollouts, or pre and post analysis with controls. When that isn’t possible, document assumptions and apply a level of confidence to your estimate.

- Translate impact into value

  Apply a consistent cost model that includes vendor and tooling costs, internal labor, SME time, and learner time so the analysis reflects the full investment.

- Report results in a Finance-ready format

  Be clear about what was included, what was excluded, and the time horizon. A transparent range builds more trust than a precise number based on hidden assumptions.
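
The last three steps can be sketched as a small calculation. This is a minimal illustration, not a prescribed method: the function names, hourly rates, benefit figure, and the attribution and confidence percentages are all hypothetical. It uses the standard ROI formula (net benefit divided by cost) and, as one way to apply a level of confidence, discounts the measured benefit by an attribution share and a confidence factor to produce a range rather than a single number.

```python
def full_program_cost(vendor_and_tooling, internal_labor_hours, sme_hours,
                      learner_hours, internal_rate, sme_rate, learner_rate):
    """Sum vendor/tooling spend plus internal labor, SME time, and learner time."""
    return (vendor_and_tooling
            + internal_labor_hours * internal_rate
            + sme_hours * sme_rate
            + learner_hours * learner_rate)


def adjusted_benefit(gross_benefit, attribution, confidence):
    """Discount the measured benefit by the share attributed to the program
    and by the confidence placed in that attribution (both between 0 and 1)."""
    return gross_benefit * attribution * confidence


def roi_percent(benefit, cost):
    """Standard ROI: net benefit divided by cost, as a percentage."""
    return (benefit - cost) / cost * 100


# Hypothetical inputs for one program.
cost = full_program_cost(
    vendor_and_tooling=40_000,
    internal_labor_hours=200, sme_hours=50, learner_hours=1_500,
    internal_rate=60, sme_rate=90, learner_rate=45,
)
gross_benefit = 400_000  # measured business improvement over the time horizon

# Conservative and optimistic assumptions give a range, not a point estimate.
low = roi_percent(adjusted_benefit(gross_benefit, attribution=0.4, confidence=0.7), cost)
high = roi_percent(adjusted_benefit(gross_benefit, attribution=0.6, confidence=0.9), cost)

print(f"Full cost: ${cost:,.0f}")
print(f"ROI range: {low:.0f}% to {high:.0f}%")
```

Note how much of the full cost in this example comes from learner time rather than vendor spend, and how wide the ROI range is once the assumptions are stated. Reporting both ends, along with the assumptions behind them, is what makes the result usable by Finance.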

Use your tech stack as a budgeting lever

We recommend making smart, intentional decisions about tech investment. Many L&D teams benefit from starting with the tools people already use day to day, then embedding learning where work happens. From there, if you do add to your stack, prioritize technology that helps you rein in spend and drive business impact.

A few principles to guide decisions:

- Embed learning in the flow of work

  Deliver guidance inside the tools people already use, such as collaboration platforms, knowledge bases, ticketing systems, and CRM. This reduces friction and increases application.

- Lower the cost of creating and updating content

  Prioritize tools that make it faster to produce, refresh, and reuse training. Frequent updates shouldn’t require new vendor projects each time.

- Scale without fragmentation

  Use systems that support localization and version control, so global programs stay consistent as they expand.

- Build governance into the system

  Look for templates, brand controls, permissions, and approval workflows that maintain quality and reduce duplication.

- Make measurement part of the workflow

  Connect engagement and usage data to program goals. Use it to identify drop-off points, confusion, and where reinforcement is needed.

- Protect team capacity

  Invest in workflows that allow a lean team to produce, maintain, and iterate efficiently, especially for onboarding, compliance, and process change.

Tech is often what makes blended models workable in practice: it lets you centralize standards, governance, and measurement while still letting teams move quickly on role- and context-specific learning.

Remember, building an L&D budget isn’t about defending a number — it’s about designing a system that consistently produces measurable impact.

Amy Vidor, PhD, is a Learning & Development Evangelist at Synthesia, where she researches learning trends and helps organizations apply AI at scale. With 15 years of experience, she has advised companies, governments, and universities on skills.

Frequently asked questions

What should be included in an L&D budget?

How much should you budget per employee for learning and development?

What are the most common L&D budget models?

What’s the difference between an L&D budget and an L&D cost center?

How do you calculate the true cost of a training program?

When should you use the Phillips ROI method for L&D?

How do you justify an L&D budget to Finance?

Use a clear cost taxonomy, define unit-cost metrics (cost per completion, learning hour, proficiency), and connect priority programs to measurable outcomes like time-to-proficiency, quality, productivity, or revenue.
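
The unit-cost metrics named here are simple ratios. The sketch below shows one way to compute them; the function name and all input figures are hypothetical, and "proficiency" is assumed to mean the number of learners who reached a defined proficiency bar.

```python
def unit_costs(total_cost, completions, learning_hours, proficient_learners):
    """Divide a program's full cost by each unit of output.

    Returns None for a metric whose denominator is zero, so a program with
    no completions yet does not raise an error.
    """
    def per(count):
        return total_cost / count if count else None

    return {
        "cost_per_completion": per(completions),
        "cost_per_learning_hour": per(learning_hours),
        "cost_per_proficient_learner": per(proficient_learners),
    }


# Hypothetical program: $124,000 full cost, 400 completions,
# 1,500 learning hours, 310 learners reaching proficiency.
metrics = unit_costs(total_cost=124_000, completions=400,
                     learning_hours=1_500, proficient_learners=310)

for name, value in metrics.items():
    print(f"{name}: ${value:,.2f}")
```

Tracking the same ratios for every priority program, using the same cost taxonomy, is what makes programs comparable from one budget cycle to the next.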
